If we are to exploit the ever-larger volumes of scientific data at our disposal, not least to tackle climate change, we need to bypass the bottlenecks that limit our ability to gain insight from that data. Seagate Technology’s Nir Elron looks at examples of how this can be achieved.

When we think of geoscience – the study of the solid earth, its waters and the air that envelops it – we think of geologists, conservationists, and oceanographers, perhaps dressed in white lab coats and scattered across the remote corners of the globe. Their work in trying to understand the impact of climate change (the effects of human activity on environments and natural cycles) is vital. But one crucial factor can severely hamper their progress: data.

According to the 2022 Global DataSphere forecast report by IDC, 221 ZB of data will be generated in the global datasphere by 2026. Tracking seismic shifts, monitoring changing bird migrations, and capturing rapid changes in weather patterns all create swathes of data that need to be recorded, processed, and stored. With data so fundamental to the geosciences, that volume poses a significant challenge.

Often, data infrastructure struggles to keep up with the quantities of information produced. More time is spent waiting for data to be uploaded, downloaded, and moved than acting on the insights it can provide. The data becomes a bottleneck when it should be a boon for researchers – especially in the face of climate change.

On top of this, managing and processing data in the rugged environments in which geoscientists operate makes it uniquely difficult to keep that data secure, accessible, and of value. Scientists and technicians use all kinds of specialist technology to measure everything from soil composition to minute changes in the atmosphere – it's high time data management techniques became just as sophisticated.

The data challenge above and below the surface

The race is on to map the oceans by 2030, but this is a huge undertaking. By the National Ocean Service’s most recent count, barely 20% of the ocean is explored and just 5% is charted. Similarly, a recent study by Dalhousie University in Canada found that 86% of the world’s living species have yet to be discovered. We are in a new age of discovery and data will be crucial in mapping these new undersea lands.

However, working in these environments presents unique challenges. Relying on innovations in telecommunications networks is not enough, as uploading data can take days, even on 5G, severely hampering the efficiency of geoscientific researchers. When it’s a question of terabytes at a time, such delays are simply unworkable. Seagate’s Rethink Data report estimates that businesses and institutions only activate and use 32% of their data. That represents a loss of value and critical information at a time when the stakes are so high.

This is where technology can really make a difference. It can speed up critical research with better processing power, aid human decision-making in emergencies, and help direct and focus conservation efforts. For example, at Seagate, we recently partnered with the Montana Space Grant Consortium, a NASA higher education programme, and the Autonomous Aerial Systems Office in their work to collect and analyse climate data, including that related to wildfires.

Wildfires are an important natural process in many environments, but they are also dangerous and have grown in scale, intensity, and frequency in the last few years. With each fire event generating 12-14 TB of data, wildfire research produces an overwhelming amount of information. It is crucial work, because real-time analysis is essential to guide evacuations, emergency responders, and firefighters. A solution like a portable shuttle array can alleviate data bottlenecks with immediate impact.
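
To put those volumes in perspective, here is a rough back-of-the-envelope sketch in Python of how long a single fire event's 12-14 TB would take to upload over a network link. The link speeds and the `transfer_days` helper are illustrative assumptions rather than measurements from the project, and the calculation assumes decimal terabytes and a sustained, uninterrupted connection.

```python
def transfer_days(terabytes: float, megabits_per_second: float) -> float:
    """Days needed to move `terabytes` (decimal TB) over a sustained link."""
    bits = terabytes * 1e12 * 8                    # TB -> bytes -> bits
    seconds = bits / (megabits_per_second * 1e6)   # Mbit/s -> bit/s
    return seconds / 86_400                        # seconds -> days


# Hypothetical sustained upload speeds, chosen only for illustration.
for tb in (12, 14):
    for mbps in (50, 100, 1000):
        days = transfer_days(tb, mbps)
        print(f"{tb} TB at {mbps} Mbit/s: {days:.1f} days")
```

Under these assumptions, one event's data takes well over a week to upload at a sustained 100 Mbit/s, and still more than a day at a full gigabit per second, which is the gap that physically moving storage is meant to close.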

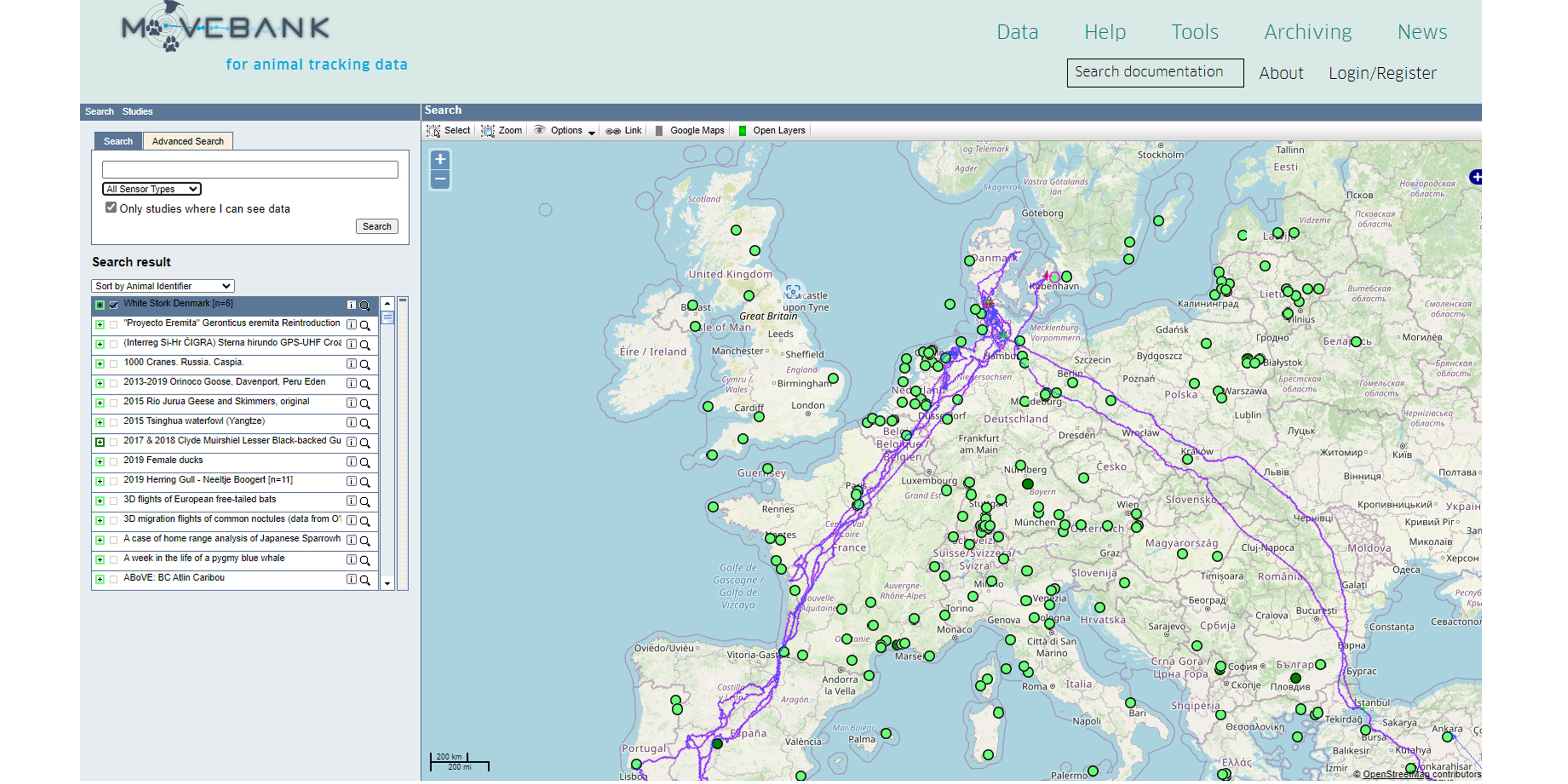

A similar project was undertaken by wildlife conservationists at NC State University, where volumes of data gathered from GPS tags, camera traps, and other techniques were often left unanalysed because there was simply too much of it. In response, researchers created Movebank, dedicated software for managing animal tracking data that allows scientists to collect, manage, share, analyse, and archive it.

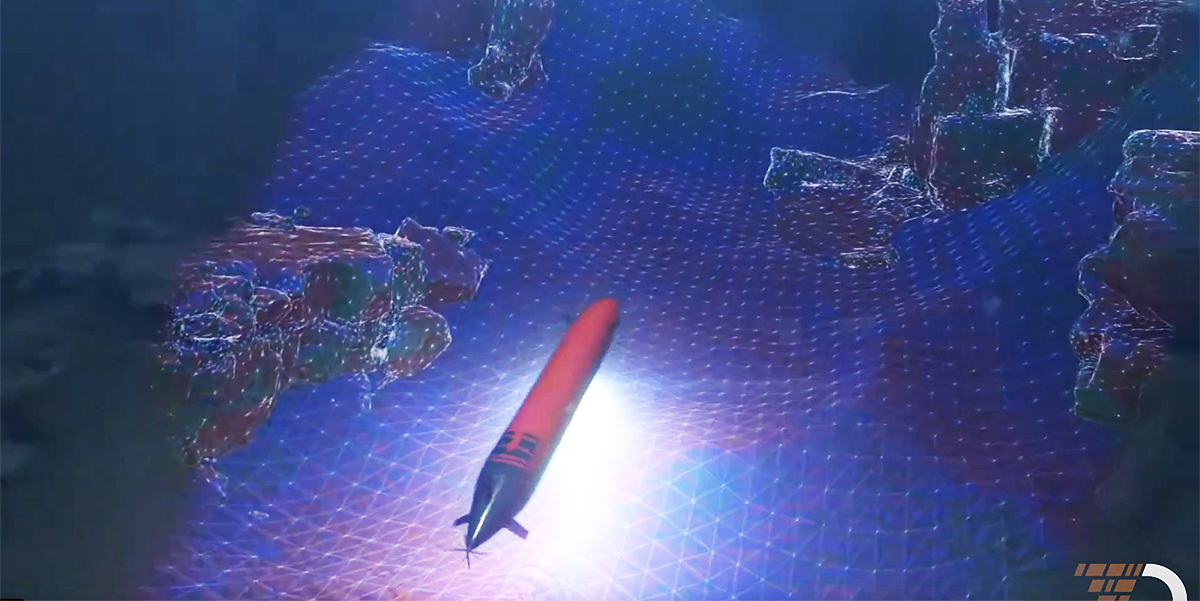

In the maritime sector, there are already some promising solutions from tech firms such as Terradepth. Collecting data at the edge – from the most remote oceanic locations – and then transferring it in bulk in a smart array to an on-premises access point means that it can be viewed and processed immediately. Insights on ocean warming, where to conserve marine life, and where to build sustainable infrastructure are too important to be lost through operational oversight or left discarded on a hard drive.

The future is in the cloud

It’s clear that data has real potential to help change the world – but only if it's managed properly. Whether it’s captured underwater or on the grasslands of Montana, the journey of data from the source to end-users must be fast and seamless to provide the most value. Cloud-based storage can be an essential part of making this happen, but we must ensure that the path to the cloud is unobstructed.

The technology investments in drones or unmanned underwater vehicles are impressive, but to get the most use out of that technology, the connecting data infrastructure within the geoscience sector must be just as robust and innovative.
